Embedded System Optimization: Latency, Throughput, Power, and Their Trade-Offs

Introduction

Embedded systems are everywhere, from smartwatches and medical devices to automotive controllers and IoT sensors. When designing such systems, engineers do not aim for functionality alone; they also strive for the best achievable performance under strict constraints. Among the many performance parameters, three stand out as the most critical: latency, throughput, and power consumption. Understanding these concepts and, more importantly, the trade-offs between them is essential for undergraduate students entering the field of embedded systems.

What is Embedded System Optimization?

Embedded system optimization is the process of improving system efficiency while working within limited resources such as memory, processing capability, and energy. Unlike general-purpose computing systems, embedded systems are designed for specific tasks, often in real-time environments.

A well-optimized system ensures:

- Fast response to inputs

- Efficient processing of tasks

- Minimal energy consumption

- Reliable and predictable behaviour

However, achieving all these simultaneously is rarely possible, leading to necessary trade-offs.

Key Performance Metrics in Embedded Systems

1) Latency: Measuring Responsiveness

- Latency refers to the time delay between an input and the corresponding output. It is a crucial parameter in systems where timing is critical.

- In practical terms, latency determines how quickly a system reacts. For example, in an automotive airbag system, the time between collision detection and airbag deployment must be extremely small.

- Lower latency improves responsiveness but often requires keeping the processor active and running at higher speeds.

2) Throughput: Measuring Processing Capacity

- Throughput defines the amount of work completed per unit time. It is especially important in systems that process continuous streams of data.

- For instance, a video processing embedded system must handle multiple frames per second. Higher throughput ensures smoother operation and better performance.

- Key characteristics of throughput:

a) Measured in work per unit time, for example tasks/sec, bits/sec, or frames/sec.

b) Improved through parallelism and efficient algorithms.

c) Often requires higher computational power.

However, increasing throughput typically increases energy consumption.
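To make the video example concrete, the required throughput can be estimated from the frame size and frame rate. The sketch below is only an illustration for an uncompressed stream; the function names and the 640x480, 2 bytes per pixel, 30 fps figures used afterwards are assumptions chosen for the arithmetic, not values from any particular camera.

```c
#include <assert.h>
#include <stdint.h>

/* Raw data rate of an uncompressed video stream in bytes per second. */
uint64_t video_bytes_per_second(uint32_t width, uint32_t height,
                                uint32_t bytes_per_pixel, uint32_t fps)
{
    assert(width > 0 && height > 0 && bytes_per_pixel > 0 && fps > 0);
    return (uint64_t)width * height * bytes_per_pixel * fps;
}

/* Time available to process one frame, in microseconds (rounded down). */
uint32_t frame_budget_us(uint32_t fps)
{
    assert(fps > 0);
    return 1000000u / fps;
}
```

For the assumed 640x480 stream at 2 bytes per pixel and 30 fps, `video_bytes_per_second(640, 480, 2, 30)` gives 18,432,000 bytes per second, and `frame_budget_us(30)` gives 33,333 µs per frame. If processing a frame takes longer than that budget, the system cannot sustain the target throughput.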

3) Power Consumption: Measuring Energy Efficiency

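- Power consumption is the rate at which the system uses electrical energy, measured in watts (W) or milliwatts (mW). It is a critical factor in battery-operated devices such as wearable electronics and IoT nodes, because battery life depends on the total energy drawn over time.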

- Efficient power management ensures longer battery life and reduced heat generation.

- Common power optimization techniques include:

a) Using sleep and idle modes.

b) Reducing clock frequency.

c) Turning off unused peripherals.

d) Using interrupt-driven execution.

- While reducing power is beneficial, it may negatively affect latency and throughput.
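The effect of sleep modes on battery life can be estimated with a simple duty-cycle calculation. The following is a rough sketch under idealized assumptions (no self-discharge, no regulator losses); the function names and the current and capacity figures used afterwards are illustrative, not taken from a datasheet.

```c
#include <assert.h>

/* Average current (mA) of a device that is active for a fraction
 * `duty` of the time (0.0 to 1.0) and asleep for the rest. */
double average_current_ma(double active_ma, double sleep_ma, double duty)
{
    assert(duty >= 0.0 && duty <= 1.0);
    return duty * active_ma + (1.0 - duty) * sleep_ma;
}

/* Idealized battery life in hours: capacity divided by average draw.
 * Ignores self-discharge, regulator losses, and temperature effects. */
double battery_life_hours(double capacity_mah, double avg_current_ma)
{
    assert(avg_current_ma > 0.0);
    return capacity_mah / avg_current_ma;
}
```

As an example, assume a 220 mAh cell, 10 mA when active, and 0.01 mA when asleep. Running continuously, the device lasts about 22 hours. If it is active only 1% of the time, the average current drops to roughly 0.11 mA and the estimated life rises to about 2,000 hours. The price is that events arriving during sleep must wait for a wake-up, which is where the latency cost comes from.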

Interdependence of Latency, Throughput, and Power

These three metrics are deeply interconnected. Improving one often degrades another, creating a design challenge.

For example:

- Increasing processor speed reduces latency and improves throughput but increases power consumption. (Processor speed ↑, Latency ↓, Throughput ↑, Power consumption ↑).

- Reducing power by slowing the system increases latency and reduces throughput. (Power consumption ↓, Latency ↑, Throughput ↓).

- Increasing throughput using parallel processing increases power usage.

This relationship forces designers to carefully balance system requirements.
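The link between processor speed and power can be made more precise with the common first-order approximation for CMOS dynamic power, P ≈ C · V² · f, where C is the switched capacitance, V the supply voltage, and f the clock frequency. The sketch below uses that approximation to compare operating points relative to a baseline. It ignores static leakage, and the function names are hypothetical.

```c
#include <assert.h>

/* Dynamic power relative to a baseline, using the approximation
 * P ~ C * V^2 * f (static leakage ignored). v_scale and f_scale are
 * ratios to the baseline voltage and frequency. */
double relative_dynamic_power(double v_scale, double f_scale)
{
    assert(v_scale > 0.0 && f_scale > 0.0);
    return v_scale * v_scale * f_scale;
}

/* Energy for a fixed amount of work relative to the baseline: power
 * multiplied by execution time, where time scales as 1 / f_scale. */
double relative_energy_per_task(double v_scale, double f_scale)
{
    return relative_dynamic_power(v_scale, f_scale) / f_scale;
}
```

Under this model, halving only the frequency halves the power (0.5) but leaves the energy per task unchanged (1.0), because the task runs twice as long. Halving the voltage as well gives 0.125 of the power and 0.25 of the energy per task. This is why slowing the clock saves the most energy when the supply voltage can be lowered along with it.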

Trade-Off Analysis

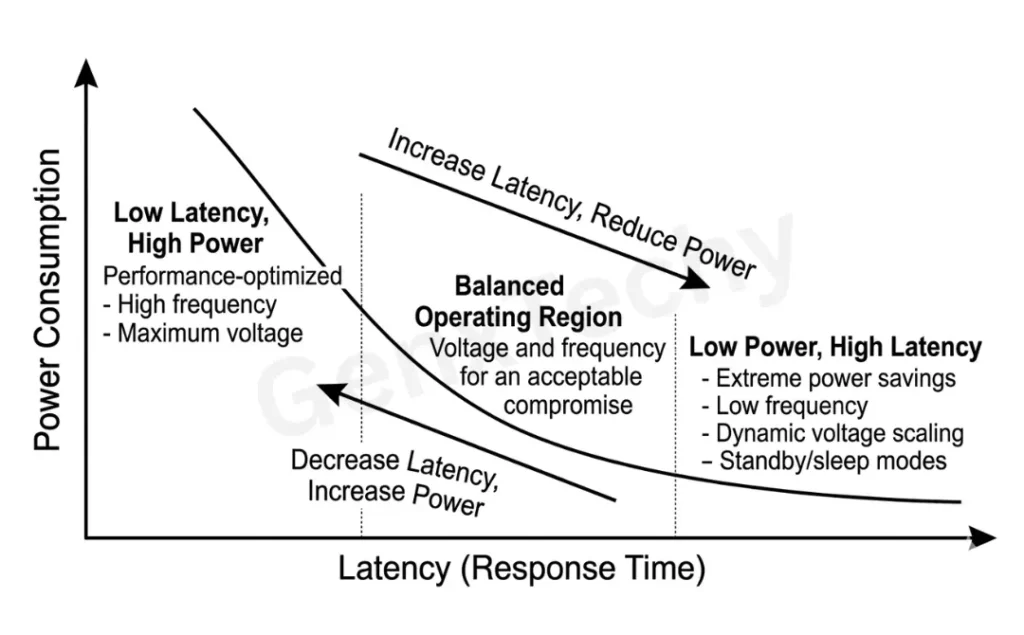

1) Latency vs Power Consumption

Reducing latency typically requires keeping the processor active and operating at high speed. This leads to increased power consumption.

- Low latency systems: Always active, fast response, high energy usage

- Low power systems: Sleep modes, slower response, energy efficient

Fig. 1: Latency vs Power Consumption

- Example:

A medical monitoring system prioritizes latency to detect emergencies instantly, even if it consumes more power.

Table 1: Latency vs Power Consumption

| Parameter | Latency | Power Consumption |
|---|---|---|
| Definition | Time delay between input and system response | Rate at which the system uses electrical energy |
| Primary Goal | Minimize response time | Minimize energy usage |
| Unit of Measurement | Seconds (ms, µs, ns) | Watts (W), milliwatts (mW) |
| Focus Area | Speed and responsiveness | Energy efficiency and battery life |
| System Behavior | Faster execution, immediate response | Reduced activity, energy-saving modes |
| CPU Operation | High clock speed, continuous operation | Low clock speed, sleep/idle modes |
| Impact of Optimization | Reducing latency increases system responsiveness | Reducing power increases battery life |
| Effect on Other Parameter | Lower latency → higher power consumption | Lower power → higher latency |
| Typical Techniques | Interrupt handling, faster processors, real-time scheduling | Sleep modes, DVFS, peripheral shutdown |
| Application Examples | Airbag systems, industrial control, robotics | Wearables, IoT sensors, portable medical devices |
| Design Priority | Critical in real-time systems | Critical in battery-operated systems |
| Trade-Off Nature | Achieved at the cost of increased energy usage | Achieved at the cost of slower response |

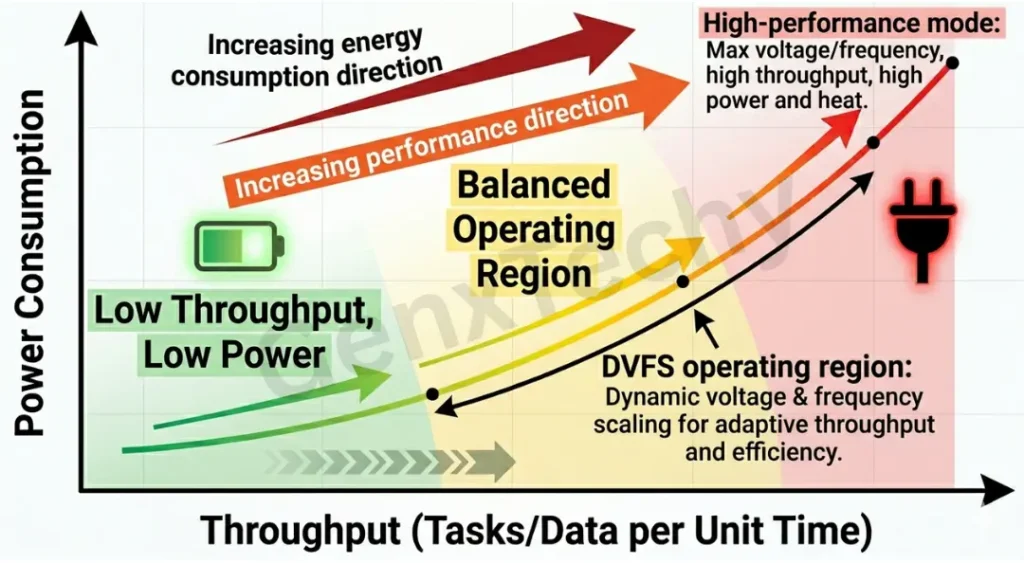

2) Throughput vs Power Consumption

Increasing throughput often involves processing more data in less time, which requires more computational resources and energy.

- High throughput systems: Parallel processing, high clock speed, more power consumption.

- Low power systems: Reduced processing rate, lower throughput.

Fig. 2: Throughput vs Power Consumption

- Example:

A surveillance camera processing high-resolution video requires high throughput but consumes significant power.

Table 2: Throughput vs Power Consumption

| Parameter | Throughput | Power Consumption |
|---|---|---|
| Definition | Amount of data or number of tasks processed per unit time | Rate at which the system consumes electrical energy |
| Primary Goal | Maximize processing capacity | Minimize energy usage |
| Unit of Measurement | Tasks/sec, bits/sec, frames/sec | Watts (W), milliwatts (mW) |
| Focus Area | Performance and productivity | Energy efficiency and battery life |
| System Behavior | Continuous processing, high activity | Reduced activity, energy-saving modes |
| CPU Operation | High clock speed, parallel execution | Low clock speed, sleep/idle states |
| Impact of Optimization | Increasing throughput improves system performance | Reducing power extends system lifetime |
| Effect on Other Parameter | Higher throughput → higher power consumption | Lower power → reduced throughput |
| Typical Techniques | Parallel processing, DMA, pipelining, efficient algorithms | DVFS, sleep modes, peripheral shutdown |
| Application Examples | Video processing, networking devices, real-time analytics | Wearables, IoT sensors, portable embedded devices |
| Design Priority | Critical in data-intensive systems | Critical in battery-powered systems |
| Trade-Off Nature | Achieved at the cost of increased energy usage | Achieved at the cost of reduced processing capability |

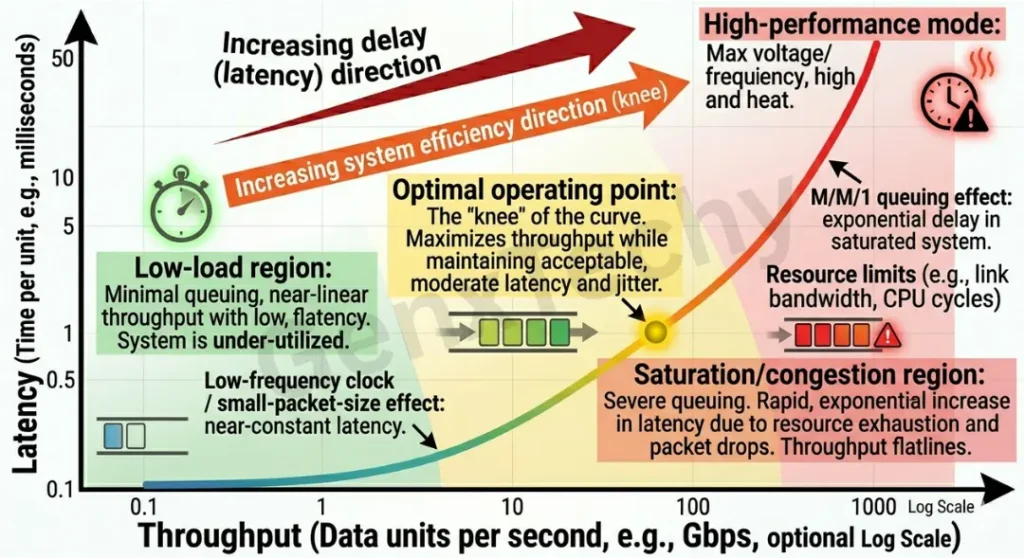

3) Latency vs Throughput

Latency and throughput are two fundamental performance metrics in embedded systems. Both describe performance, but latency measures how long a single event takes to be handled, while throughput measures how much work is completed per unit time. Optimizing one does not necessarily improve the other: a system tuned for the fastest individual response often gives up some throughput, and a system tuned for maximum throughput often delays individual responses.

- Low latency systems: Immediate response, less efficient batch processing.

- High throughput systems: Batch processing, delayed individual response.

Fig. 3: Latency vs Throughput

- Example:

A data logging system processes data in batches, improving throughput but increasing latency for individual data points.
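The batching trade-off in this example can be illustrated with a small cost model. The sketch below assumes every batch pays a fixed overhead (for instance a wake-up or a storage write) plus a per-sample cost. The structure, function names, and numbers are hypothetical and only meant to show the shape of the trade-off.

```c
#include <assert.h>

/* Hypothetical cost model for a batching data logger. */
typedef struct {
    double overhead_ms;    /* fixed cost paid once per batch */
    double per_sample_ms;  /* processing cost per sample */
    double period_ms;      /* time between arriving samples */
} batch_model_t;

/* CPU busy time per sample: batching spreads the fixed overhead
 * across all samples in the batch. */
double busy_ms_per_sample(const batch_model_t *m, int batch_size)
{
    assert(batch_size >= 1);
    return m->overhead_ms / batch_size + m->per_sample_ms;
}

/* Worst-case latency of the first sample in a batch: it waits for the
 * remaining samples to arrive, then for the whole batch to be processed. */
double worst_case_latency_ms(const batch_model_t *m, int batch_size)
{
    assert(batch_size >= 1);
    return (batch_size - 1) * m->period_ms
         + m->overhead_ms + batch_size * m->per_sample_ms;
}
```

With an assumed 5 ms overhead, 1 ms per sample, and one sample every 10 ms, processing each sample immediately costs 6 ms of CPU time per sample with a 6 ms worst-case latency. Batching 10 samples cuts the CPU time to 1.5 ms per sample, which raises the sustainable rate from about 167 to about 667 samples per second, but the first sample in each batch now waits 105 ms.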

Table 3: Latency vs Throughput

| Parameter | Latency | Throughput |
|---|---|---|
| Definition | Time delay between input and system response | Amount of data or number of tasks processed per unit time |
| Primary Goal | Minimize response time | Maximize processing capacity |
| Unit of Measurement | Seconds (ms, µs, ns) | Tasks/sec, bits/sec, frames/sec |
| Focus Area | Responsiveness | Productivity and efficiency |
| System Behavior | Immediate handling of individual events | Continuous processing of multiple tasks |
| Processing Style | Event-driven, real-time execution | Batch processing or pipeline execution |
| Impact of Optimization | Reducing latency improves quick response | Increasing throughput improves total work done |
| Effect on Other Parameter | Lower latency may reduce throughput (less batching) | Higher throughput may increase latency (due to queuing/batching) |
| Typical Techniques | Interrupts, fast processors, real-time scheduling | Parallel processing, pipelining, buffering |
| Application Examples | Airbag systems, robotics control, industrial automation | Video streaming, data logging, network packet processing |
| Design Priority | Critical in real-time systems | Critical in data-intensive systems |
| Trade-Off Nature | Achieved by prioritizing immediate execution | Achieved by optimizing overall system efficiency |

Techniques to Balance Latency, Throughput, and Power

1. Dynamic Voltage and Frequency Scaling (DVFS)

- Adjust CPU voltage and clock speed based on workload

- High speed when needed, low otherwise

2. Interrupt-Driven Design

- Avoid continuous polling

- Reduces power while maintaining reasonable latency

3. Task Scheduling (RTOS)

- Prioritize critical tasks

- Balance responsiveness and workload
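The idea of prioritizing critical tasks can be sketched as fixed-priority selection: among the tasks that are ready to run, pick the most urgent one. This is a simplified illustration, not the scheduler of any specific RTOS; the `task_t` type, the convention that a larger number means higher priority, and the task names used afterwards are all assumptions.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical task descriptor; a real RTOS keeps far more state. */
typedef struct {
    const char *name;
    unsigned priority;  /* larger number = more urgent (an assumption) */
    bool ready;
} task_t;

/* Fixed-priority selection: return the index of the ready task with the
 * highest priority, or -1 if nothing is ready (the idle case, where the
 * CPU could enter a low-power mode). Ties go to the earlier entry. */
int pick_next_task(const task_t *tasks, size_t count)
{
    assert(tasks != NULL || count == 0);
    int best = -1;
    for (size_t i = 0; i < count; i++) {
        if (!tasks[i].ready)
            continue;
        if (best < 0 || tasks[i].priority > tasks[(size_t)best].priority)
            best = (int)i;
    }
    return best;
}
```

If a low-priority logger task and a high-priority motor-control task are both ready, the motor-control task runs first, which keeps its latency low while the logger absorbs the delay. When no task is ready, the -1 result marks the point where the system can sleep to save power.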

4. Hardware Acceleration

- Use dedicated hardware (DSP, GPU, accelerators)

- Improves throughput with lower energy per operation

5. Data Buffering

- Batch non-critical tasks

- Improves throughput without constant CPU usage
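Data buffering is commonly implemented with a ring (circular) buffer: a producer stores samples as they arrive, and the CPU wakes up occasionally to drain several at once. The following is a minimal single-producer, single-consumer sketch. The type and function names are hypothetical, the capacity of 8 is arbitrary, and on real hardware the indices shared with an ISR would need `volatile` or atomic access.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define RING_CAPACITY 8u  /* illustrative size; must be a power of two */

typedef struct {
    uint16_t data[RING_CAPACITY];
    size_t head;  /* total number of items written so far */
    size_t tail;  /* total number of items read so far */
} ring_t;

/* Producer side (for example an ISR): returns false when the buffer
 * is full, so the caller can count or handle the dropped sample. */
bool ring_push(ring_t *r, uint16_t value)
{
    assert(r != NULL);
    if (r->head - r->tail == RING_CAPACITY)
        return false;
    r->data[r->head % RING_CAPACITY] = value;
    r->head++;
    return true;
}

/* Consumer side: drain up to `max` items in one go, oldest first,
 * and return how many were copied into `out`. */
size_t ring_pop_batch(ring_t *r, uint16_t *out, size_t max)
{
    assert(r != NULL && out != NULL);
    size_t n = 0;
    while (n < max && r->tail != r->head) {
        out[n++] = r->data[r->tail % RING_CAPACITY];
        r->tail++;
    }
    return n;
}
```

A zero-initialized `ring_t` is an empty buffer. The buffer size sets the trade-off: a larger buffer lets the CPU sleep longer between drains, but the oldest sample waits longer before it is processed.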

FAQs

What is embedded system optimization?

Embedded system optimization is the process of improving system performance while using limited resources such as memory, processing power, and energy efficiently. It ensures the system meets its performance requirements without unnecessary overhead.

What is latency in embedded systems?

Latency is the time delay between an input event and the system’s response. It is critical in real-time systems where immediate action is required, such as automotive safety or industrial control.

What is throughput?

Throughput refers to the amount of data processed or number of tasks completed per unit time. It indicates how efficiently a system handles workload over time.

What is power consumption in embedded systems?

Power consumption is the rate at which the system uses electrical energy, usually measured in watts or milliwatts. It is especially important for battery-powered devices like IoT sensors and wearables, where the total energy drawn determines battery life.

Why can’t we optimize latency, throughput, and power simultaneously?

Because they are interdependent and often conflicting:

- Reducing latency usually requires higher processing speed → increases power

- Increasing throughput usually requires more computation per unit time → increases power

- Reducing power usually means slowing the system or sleeping more often → increases latency and reduces throughput

What is the trade-off between latency and throughput?

- Low latency → faster response to individual tasks but may reduce overall throughput

- High throughput → more tasks processed overall but individual tasks may experience delay

Explain how trade-offs among latency, throughput, and power consumption influence embedded system optimization.

In embedded systems, optimization involves balancing three key performance metrics: latency, throughput, and power consumption. These parameters are interdependent, and improving one often degrades the others, leading to necessary trade-offs.

- Latency refers to the time delay between an input and the system response. Reducing latency requires high processing speed, continuous CPU activity, and immediate execution, which increases power consumption.

- Throughput is the amount of data or tasks processed per unit time. Increasing throughput typically involves parallel processing and higher clock speeds, which also leads to increased power usage.

- Power consumption is the rate at which the system uses energy. Reducing power relies on techniques like lowering clock frequency, using sleep modes, and minimizing processing activity, which tend to increase latency and reduce throughput.

Key Trade-offs:

- Latency vs Power: Low latency usually costs more power; saving power increases response delay.

- Throughput vs Power: High throughput needs more computation → higher power consumption.

- Latency vs Throughput: Immediate processing (low latency) may reduce overall throughput, while batch processing (high throughput) increases latency.

How does power consumption affect system performance?

Reducing power often involves:

- Lower clock speeds

- Sleep modes

This leads to:

- Increased latency

- Reduced throughput

Thus, power optimization can impact performance negatively if not balanced properly.

What is DVFS in embedded systems?

Dynamic Voltage and Frequency Scaling (DVFS) is a technique that adjusts the processor’s voltage and frequency based on workload to balance performance and power consumption.
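One way to picture a DVFS policy is as a small "governor" that picks the slowest operating point able to carry the current load. The sketch below is a simplified illustration: the frequency table, the function name, and the headroom parameter are hypothetical, and on a real chip the operating points and the matching voltages come from the datasheet or the vendor's clock driver.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical operating points in MHz, lowest first. */
static const unsigned freq_table_mhz[] = { 8, 24, 48, 96 };
#define NUM_LEVELS (sizeof freq_table_mhz / sizeof freq_table_mhz[0])

/* Pick the slowest frequency that can carry the measured load while
 * keeping some headroom. load_pct is the CPU utilization (0 to 100)
 * measured while running at current_mhz. */
unsigned dvfs_select_mhz(unsigned current_mhz, unsigned load_pct,
                         unsigned headroom_pct)
{
    assert(load_pct <= 100 && headroom_pct < 100);
    /* Work actually demanded, in units of MHz times percent. */
    unsigned long demand = (unsigned long)current_mhz * load_pct;
    for (size_t i = 0; i < NUM_LEVELS; i++) {
        /* Usable capacity at this level after reserving headroom. */
        unsigned long capacity =
            (unsigned long)freq_table_mhz[i] * (100 - headroom_pct);
        if (capacity >= demand)
            return freq_table_mhz[i];
    }
    return freq_table_mhz[NUM_LEVELS - 1];  /* saturate at the top level */
}
```

With 20% headroom, a CPU that is 10% busy at 96 MHz can drop to 24 MHz, while one that is 90% busy at 96 MHz stays at 96 MHz. The headroom is the latency safeguard: a smaller value saves more power but leaves less spare capacity when the workload suddenly rises.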

What is the role of buffering in throughput optimization?

Buffering helps in:

- Storing intermediate data

- Enabling continuous data flow

- Preventing the CPU or a peripheral from stalling while it waits for data

This improves overall system throughput.

Why are interrupts preferred over polling in most embedded applications?

In embedded systems, interrupts are generally preferred over polling because they provide a more efficient and responsive way of handling events.

- In polling, the CPU continuously checks (loops over) a device or flag to see if an event has occurred. This leads to wastage of CPU time, as the processor remains busy even when no event is present. As a result, polling increases power consumption and reduces overall system efficiency.

- In contrast, interrupts allow the CPU to perform other tasks or remain in a low-power state until an event occurs. When the event happens, an interrupt signal immediately notifies the CPU, which then executes an Interrupt Service Routine (ISR) to handle it. This results in faster response time (lower latency) and better utilization of system resources.

Key Reasons for Preferring Interrupts:

- Efficient CPU utilization: CPU is free to perform other tasks instead of continuously checking

- Lower power consumption: Enables use of sleep or idle modes

- Better responsiveness: Immediate reaction to events

- Improved system performance: Supports multitasking and real-time behavior

Interrupt-driven systems are more efficient, responsive, and power-conscious compared to polling, making them the preferred choice in most embedded applications.
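The interrupt-driven pattern described above can be sketched as a short ISR that records the event and a main loop that sleeps until there is something to do. This is a host-runnable illustration, not code for a specific microcontroller: `adc_isr`, `main_loop_step`, and `cpu_sleep_until_interrupt` are hypothetical names, and the sleep function is an empty placeholder for the platform's wait instruction (for example WFI on Arm Cortex-M).

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Shared between the ISR and the main loop, hence volatile. */
static volatile bool sample_ready = false;
static volatile uint16_t latest_sample = 0;

/* Hypothetical ISR: on real hardware the interrupt controller would
 * invoke this when the peripheral signals new data. Keep it short. */
void adc_isr(uint16_t raw_value)
{
    latest_sample = raw_value;
    sample_ready = true;
}

/* Placeholder for the platform's sleep instruction. It does nothing
 * here so that the sketch also runs on a PC. */
static void cpu_sleep_until_interrupt(void) { }

/* One pass of the main loop: if the ISR has delivered a sample, store
 * it in *out and return true; otherwise sleep instead of spinning.
 * A real system would guard the check and the sleep with a short
 * critical section so an interrupt cannot slip in between them. */
bool main_loop_step(uint16_t *out)
{
    assert(out != NULL);
    if (!sample_ready) {
        cpu_sleep_until_interrupt();
        return false;
    }
    sample_ready = false;  /* consume the event */
    *out = latest_sample;
    return true;
}
```

In a polling design, the equivalent loop would read the peripheral's status register on every pass and keep the CPU fully active. Here the CPU does work only after `adc_isr` has run, and spends the rest of the time in the sleep call.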